The digital economy is built on experimentation, yet few users realize how continuously they are being tested. From interfaces and algorithms to data flows and behavioral nudges, live experimentation has become a permanent feature of modern platforms. This article examines how experimentation, once governed by strict ethical standards in medicine, has quietly migrated into technology, often without consent, transparency, or accountability. That shift raises a critical question: have users become the new guinea pigs of digital progress?

The 2020 coronavirus pandemic tested medical science and public trust simultaneously. While COVID-19 vaccines were developed rapidly through established ethical and scientific processes, conspiracy narratives also emerged, framing the virus and vaccines as planned experiments. Although the health crisis has eased and scientific progress prevailed, the skepticism and mistrust it generated continue to influence public perception.

Human history shows that progress, particularly in medicine, has always relied on experimentation. Surgical tools, pharmaceuticals, and treatment protocols evolved through trials conducted on animals and humans alike. Over time, medicine developed ethical guardrails to limit harm: consent, transparency, oversight committees, and accountability. These safeguards exist because experimentation, even when well-intended, carries inherent risk.

What remains largely unexamined is how experimentation has shifted into the digital economy without comparable ethical limits. Initially a constructive business practice, experimentation improved quality through feedback and optimization. Over time, scale replaced refinement. Today, organizations conduct continuous testing on live users who depend on digital platforms. Experimentation is no longer optional or temporary; it is a permanent condition imposed by dependency.

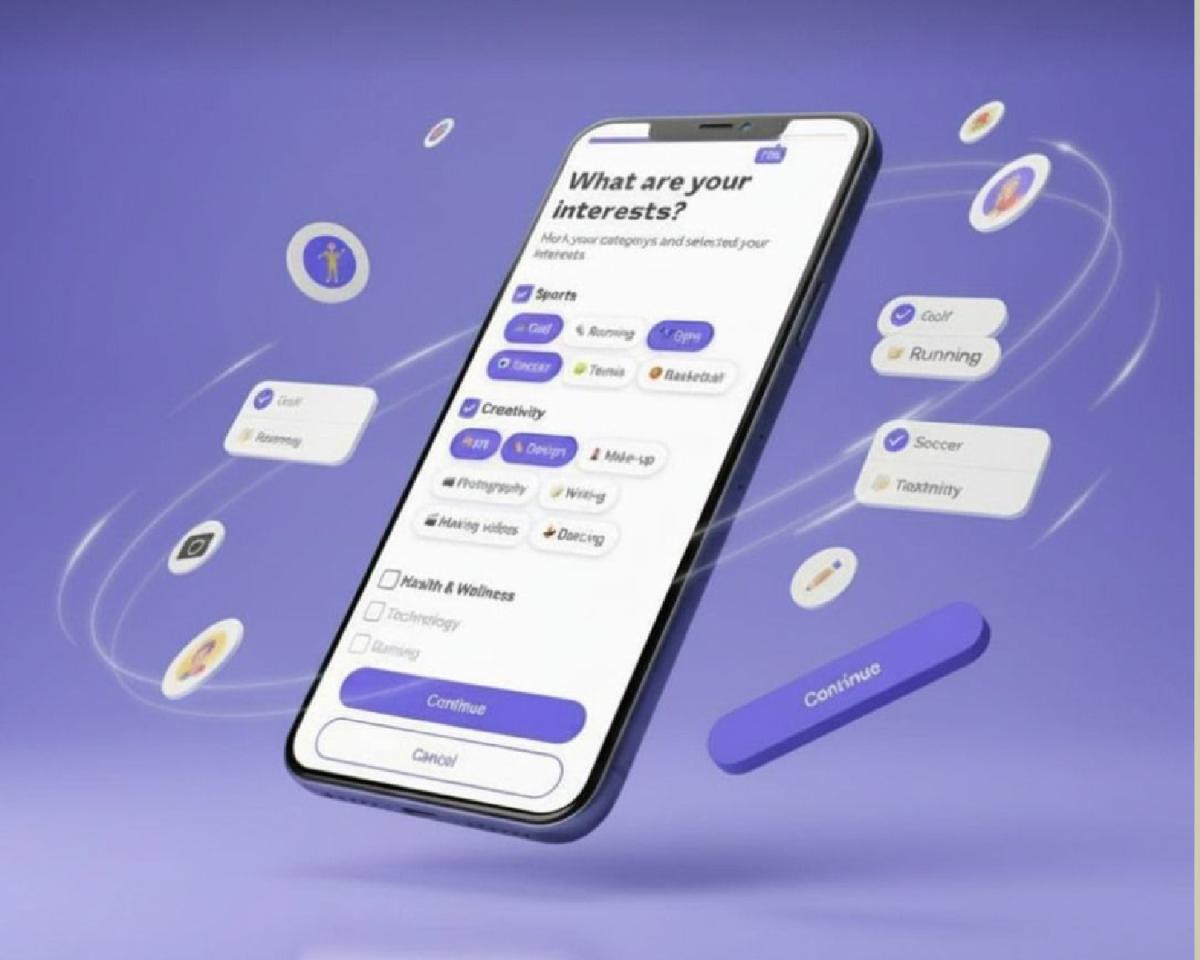

In the modern digital economy, optimization has become a governing principle. User growth, engagement metrics, and conversion rates shape product strategy, executive incentives, and company valuation. Beneath this data-driven logic lies a structural shift: the normalization of live behavioral experimentation without explicit, informed consent. Personal data is continuously collected, analyzed, and often monetized with limited transparency. Many users believe, rightly or wrongly, that every interaction is monitored to maximize advertiser value. This perception alone signals a breakdown in trust. When users feel constantly observed, even communication begins to feel unsafe.

This environment is enabled by modern software architecture. Cloud-based platforms and API-driven systems allow companies to modify functionality, interfaces, and data flows instantly from centralized servers. Unlike traditional software updates, these changes require no user approval or notification. Core behaviors, such as how transactions are processed, how balances are displayed, and how information is prioritized, can be altered silently across different user segments. The resulting power imbalance is profound: platforms experiment continuously, while users remain largely unaware that experimentation is taking place.
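The mechanism described above is commonly implemented with server-side feature flags or experiment assignment. The sketch below is a minimal illustration, assuming a Python backend; the function names, the experiment name `balance_display_v2`, and the 10% split are hypothetical. It shows how a user can be placed deterministically into a segment and served a different balance display without any change on their device.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, treatment_pct: int) -> str:
    """Deterministically place a user in 'control' or 'treatment'.

    Hashing the experiment name together with the user ID gives each user a
    stable bucket in [0, 100) per experiment, so the same user always sees
    the same variant, while the percentage can be changed on the server.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100
    return "treatment" if bucket < treatment_pct else "control"


def render_balance(user_id: str, balance_cents: int) -> str:
    # Hypothetical experiment: the treatment group sees a rounded balance.
    # Nothing on the user's device changes; only this server-side branch does.
    if assign_variant(user_id, "balance_display_v2", treatment_pct=10) == "treatment":
        return f"about ${round(balance_cents / 100)}"
    return f"${balance_cents / 100:.2f}"
```

Because the bucket depends only on the user ID and the experiment name, an operator can widen or narrow the treatment group by editing a single number on the server, which is exactly why such changes can reach users without their noticing.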

One of the most damaging consequences of this imbalance is digital gaslighting. Users encounter unexpected changes, errors, or inconsistencies and are told that nothing has changed. In low-stakes environments, this causes frustration. In financial, healthcare, or essential service platforms, it erodes confidence and increases risk. When users cannot rely on system consistency, they cannot make informed decisions. Trust, once broken, is costly to restore.

The ethical problem intensifies when experimentation occurs within essential or near-monopolistic platforms. In such cases, users are captive audiences. A banking app, messaging service, or healthcare portal cannot be abandoned without economic or social consequences. When experimentation is imposed on users who lack realistic alternatives, product testing becomes involuntary risk exposure. Failed experiments are no longer abstract learning exercises; they disrupt livelihoods, finances, and well-being.

Academic and regulatory research frameworks highlight this imbalance. Experiments involving human subjects require informed consent, risk minimization, and protections for vulnerable populations. These are non-negotiable standards. Corporate experimentation in technology, despite affecting vastly larger populations, often operates without comparable oversight. This raises a fundamental governance question: why do ethical standards weaken as scale increases?

Operational risks further compound the issue. Live testing can introduce instability, data loss, or security vulnerabilities. When systems fail, users typically bear the cost—lost data, interrupted access, financial exposure—while organizations retain the learning benefit. Without transparent rollback mechanisms, accountability, and disclosure, risk is effectively externalized onto the user base. From a governance standpoint, this represents a serious misalignment of responsibility and liability.

For boards and senior leadership, these dynamics can no longer be dismissed as technical matters. Live experimentation is not merely a product decision; it is a fiduciary issue. It directly affects data integrity, consumer trust, regulatory exposure, and brand resilience. As digital platforms increasingly function as economic and social infrastructure, the absence of formal ethical oversight constitutes a material weakness.

One structural response is the institutionalization of ethical review within product governance. Organizations should establish cross-functional experimentation review committees to evaluate proposed live tests against defined risk criteria. These reviews should assess data integrity, psychological impact, reversibility, and necessity. Not all experiments require the same scrutiny, but those affecting core functionality or vulnerable users must meet higher standards of justification and transparency.
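One way to make "defined risk criteria" operational is to encode them as a triage rule that decides how much scrutiny a proposal receives. The following is a hypothetical Python sketch: the field names, the three tiers, and the thresholds are illustrative assumptions rather than an established standard, but they follow the criteria named above (data integrity, psychological impact, reversibility, necessity, core functionality, and vulnerable users).

```python
from dataclasses import dataclass
from enum import Enum


class ReviewTier(Enum):
    SELF_SERVICE = "team sign-off"
    STANDARD = "committee review"
    ENHANCED = "committee review plus written justification and disclosure plan"


@dataclass
class ExperimentProposal:
    name: str
    touches_core_functionality: bool        # e.g. payments, balances, message delivery
    affects_vulnerable_users: bool          # e.g. minors, patients, users in financial distress
    modifies_stored_data: bool              # data-integrity risk
    psychological_impact: bool              # e.g. urgency cues, emotionally charged ranking
    reversible: bool                        # can be rolled back without residual harm
    has_non_experimental_alternative: bool  # necessity: could this be learned another way?


def review_tier(p: ExperimentProposal) -> ReviewTier:
    """Map a proposal to a review tier (illustrative thresholds only)."""
    # Core functions, vulnerable users, or irreversible changes get the highest bar.
    if p.touches_core_functionality or p.affects_vulnerable_users or not p.reversible:
        return ReviewTier.ENHANCED
    # Data, psychological, or necessity concerns still require committee review.
    if p.modifies_stored_data or p.psychological_impact or p.has_non_experimental_alternative:
        return ReviewTier.STANDARD
    # Minor, reversible interface tweaks can be approved by the owning team.
    return ReviewTier.SELF_SERVICE
```

A rule like this does not replace committee judgment; it only ensures that proposals touching core functions or vulnerable users cannot bypass review by default.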

Ethical optimization also demands operational discipline. Users should have meaningful opt-out mechanisms for non-essential experimentation. Clear thresholds must distinguish minor interface adjustments from substantive system changes. Most importantly, organizations must maintain immediate rollback capabilities and communicate honestly when experiments produce unintended consequences. Transparency is not a barrier to innovation; it is a stabilizer.
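The opt-out and rollback requirements can also be expressed in code. This is a minimal, hypothetical Python sketch: `ExperimentRegistry`, the `essential` flag, and the `incident_note` field are illustrative names, and a real system would combine this check with variant assignment and persist its state outside process memory.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Experiment:
    name: str
    essential: bool           # hypothetical: True only for changes users cannot opt out of
    enabled: bool = True      # the kill switch: False sends everyone back to the stable path
    incident_note: str = ""   # plain-language notice recorded when an experiment is rolled back


@dataclass
class ExperimentRegistry:
    experiments: dict[str, Experiment] = field(default_factory=dict)
    opted_out_users: set[str] = field(default_factory=set)

    def is_active_for(self, exp_name: str, user_id: str) -> bool:
        exp = self.experiments.get(exp_name)
        if exp is None or not exp.enabled:
            return False  # unknown or rolled-back experiments fall back to the stable path
        if not exp.essential and user_id in self.opted_out_users:
            return False  # honour the user's opt-out for non-essential tests
        return True

    def roll_back(self, exp_name: str, note: str) -> None:
        """Disable an experiment immediately and record what users should be told."""
        exp = self.experiments[exp_name]
        exp.enabled = False
        exp.incident_note = note
```

Checking the kill switch and the opt-out list before any experimental code path runs means a rollback takes effect on the next request, and the stored note gives support staff an honest answer instead of "nothing has changed."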

Ultimately, the long-term competitive advantage of digital firms will not be determined by how aggressively they extract behavioral data, but by how effectively they sustain trust. In an environment of rising regulation and declining switching costs, trust becomes a measurable asset earned through restraint, clarity, and respect for users. Innovation requires experimentation, but experimentation without consent, accountability, or proportionality is not progress. It is a failure of systems to govern themselves. As digital platforms continue to shape modern life, the critical question is no longer whether experimentation should occur, but under what ethical and institutional limits it must operate.